Amazon aims to improve biometric features and privacy with new edge AI chip in Echo devices

A new processor in Amazon’s latest generation of Echo devices is giving the Alexa assistant intriguing capabilities that the company says offer consumers a more natural experience of speech-based interaction. Amazon has also put considerable research into sound localization and computer vision so that it can offer new features without creating new biometric data storage and privacy problems, and processing on the device, at the edge, is the key.

At the Fall 2020 Devices and Services announcement from Amazon, drones flying around the home and a new online gaming service garnered much of the attention. The company’s debut of new Echo devices, however, was more significant in terms of biometrics-related developments.

Inside the globe-shaped Echo and the brand-new Echo Show 10, the AZ1 Neural Edge processor is tasked with running new and updated speech and computer vision algorithms.

“In speech processing, milliseconds matter,” said Miriam Daniel, vice president of Amazon Echo, during the product rollout event. “Imagine asking Alexa to turn on the light, and there’s a slight delay in the light coming on—that would make customers really impatient.

“Our team worked really hard to shave off hundreds of milliseconds from Alexa’s response time, [so] they invented the all-new AZ1 Neural Edge processor,” Daniel said. The silicon module has been purpose-built to run machine learning algorithms on the edge, she noted.

(The interior of the 4th Gen Echo. Source: Amazon)

Rohit Prasad, vice president and head scientist for Alexa, said, “The goal with Alexa is to make interacting with it as natural as it is to speak to humans,” and noted that advances in AI are bringing Amazon closer to that vision. Current capabilities already include feedback-based search algorithms that take user feedback (“Alexa, that’s wrong”) and use the interaction to correct the mistake. A new ability lets users teach Alexa directly by speech, rather than setting up new functions through a mobile app or online portal.

On the new Echo Show 10, the display and camera can turn to face whoever is currently speaking in a room, making video calls feel more natural. This is useful when someone moves around a room while talking or watching video, but it turns out to be rather challenging to do without storing biometric data or personally identifiable information in the form of faces and voices.

“We’re not doing [this] with facial recognition; we’re doing that just understanding sort of the form of what a human being looks like and triangulating on that,” explained Dave Limp, senior vice president of devices and services at Amazon. “The cool thing about the technology is it’s all running locally. And so none of this goes to the cloud; it’s all done locally on that neural processor and it never leaves the device,” he added.

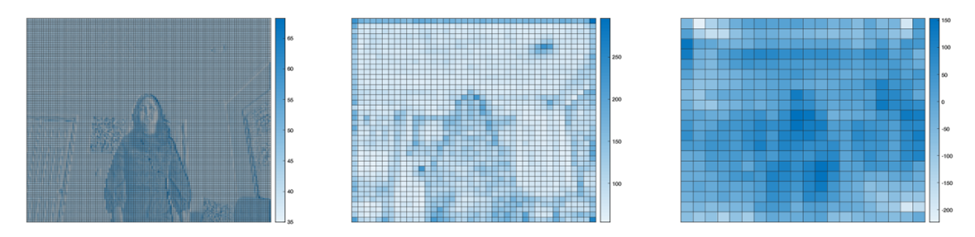

(A visualization of the non-reversible process the Echo Show 10 uses to convert images into a higher-level abstraction that guides the device’s motion. Source: Amazon)

The AZ1 processor is used in a novel way to work out which direction voices are coming from and to decide where, when and how fast to move the camera. According to a post on the Amazon Science blog, the Echo Show 10 combines sound source localization (SSL) with computer vision (CV) to identify objects and humans in the field of view and to determine which sounds are coming from people and which are merely reflections off walls.
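Amazon has not published the Echo Show 10’s localization code. As a rough illustration of the general idea only, the sketch below estimates a sound’s direction from the time difference of arrival (TDOA) between two microphones, then accepts that direction only if it lines up with a person’s bearing reported by a vision detector; otherwise the sound is treated as a likely wall reflection or non-human source. The two-microphone layout, 16 kHz sample rate, 10 cm spacing, 10-degree tolerance and every function name here are hypothetical, and a real device would use a larger microphone array and more robust methods such as GCC-PHAT.

```python
# Hypothetical sketch of SSL + vision fusion; not Amazon's implementation.
import math

SPEED_OF_SOUND_M_PER_S = 343.0  # approximate speed of sound in room-temperature air


def max_lag_samples(mic_spacing_m, sample_rate_hz):
    """Largest physically possible inter-microphone delay, in samples."""
    return math.ceil(mic_spacing_m / SPEED_OF_SOUND_M_PER_S * sample_rate_hz)


def estimate_delay(left, right, max_lag):
    """Return the lag (in samples) that maximizes sum(left[i] * right[i + lag]).

    A positive lag means the right channel is a delayed copy of the left,
    i.e. the sound reached the left microphone first.
    """
    best_lag, best_score = 0, float("-inf")
    n = min(len(left), len(right))
    for lag in range(-max_lag, max_lag + 1):
        score = sum(left[i] * right[i + lag] for i in range(n) if 0 <= i + lag < n)
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def bearing_degrees(lag, sample_rate_hz, mic_spacing_m):
    """Convert a delay into an arrival angle using a far-field model.

    0 degrees is straight ahead; positive angles point toward the left mic.
    """
    tau = lag / sample_rate_hz
    s = SPEED_OF_SOUND_M_PER_S * tau / mic_spacing_m
    s = max(-1.0, min(1.0, s))  # clamp: noise can push |s| slightly past 1
    return math.degrees(math.asin(s))


def attribute_to_person(sound_bearing, person_bearings, tolerance_deg=10.0):
    """Return the index of the detected person closest to the sound bearing,
    or None if nobody is within tolerance (e.g. a wall reflection)."""
    best_idx, best_err = None, tolerance_deg
    for idx, bearing in enumerate(person_bearings):
        err = abs(bearing - sound_bearing)
        if err <= best_err:
            best_idx, best_err = idx, err
    return best_idx


# Synthetic example: a sparse "click" pattern that reaches the right
# microphone 3 samples after the left one.
SAMPLE_RATE_HZ = 16_000  # hypothetical
MIC_SPACING_M = 0.10     # hypothetical
source = [0.0] * 64
source[10], source[17], source[31] = 1.0, -0.6, 0.8
left = source
right = [0.0] * 3 + source[:-3]

lag = estimate_delay(left, right, max_lag_samples(MIC_SPACING_M, SAMPLE_RATE_HZ))
angle = bearing_degrees(lag, SAMPLE_RATE_HZ, MIC_SPACING_M)
# Person bearings in degrees, as a (hypothetical) vision detector might report them.
target = attribute_to_person(angle, person_bearings=[-30.0, 38.0])
print(lag, round(angle, 1), target)  # prints: 3 40.0 1
```

Plain cross-correlation keeps the example readable; the fusion step is the part that mirrors the article’s point, since a sound arriving from a direction where the camera sees no person is ignored rather than followed.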

Details of Amazon’s new chip

The chip was designed in collaboration with MediaTek. MediaTek’s MT8512 forms the basis for the processor; according to MediaTek, the MT8512 was designed for “high-end audio processing and voice assistant applications.”

The MT8512 integrates a 2GHz dual-core CPU, a wide range of peripheral interfaces for ultra-high-quality audio processing, and Bluetooth 5.0 and dual-band Wi-Fi 5 connectivity. It also includes a high-performance voice DSP (digital signal processor) for fast and accurate wake-word and keyword detection in voice commands; according to MediaTek, the DSP works in conjunction with the AZ1 Neural Edge processor “to provide the most responsive Alexa experience.”

The chip is made using a 12 nanometer (nm) process. For comparison, the absolute state of the art is 5nm, while many mainstream Intel processors used in laptop and desktop PCs are made with a 14nm process. Generally speaking, the smaller the transistors, the more of them can be packed into the same amount of silicon, and the more energy efficient they tend to be. For low-cost standalone devices, then, the MediaTek chip appears to strike a good balance between processing power, energy efficiency and unit cost.
