Australian HRC calls for partial facial recognition moratorium, new AI oversight

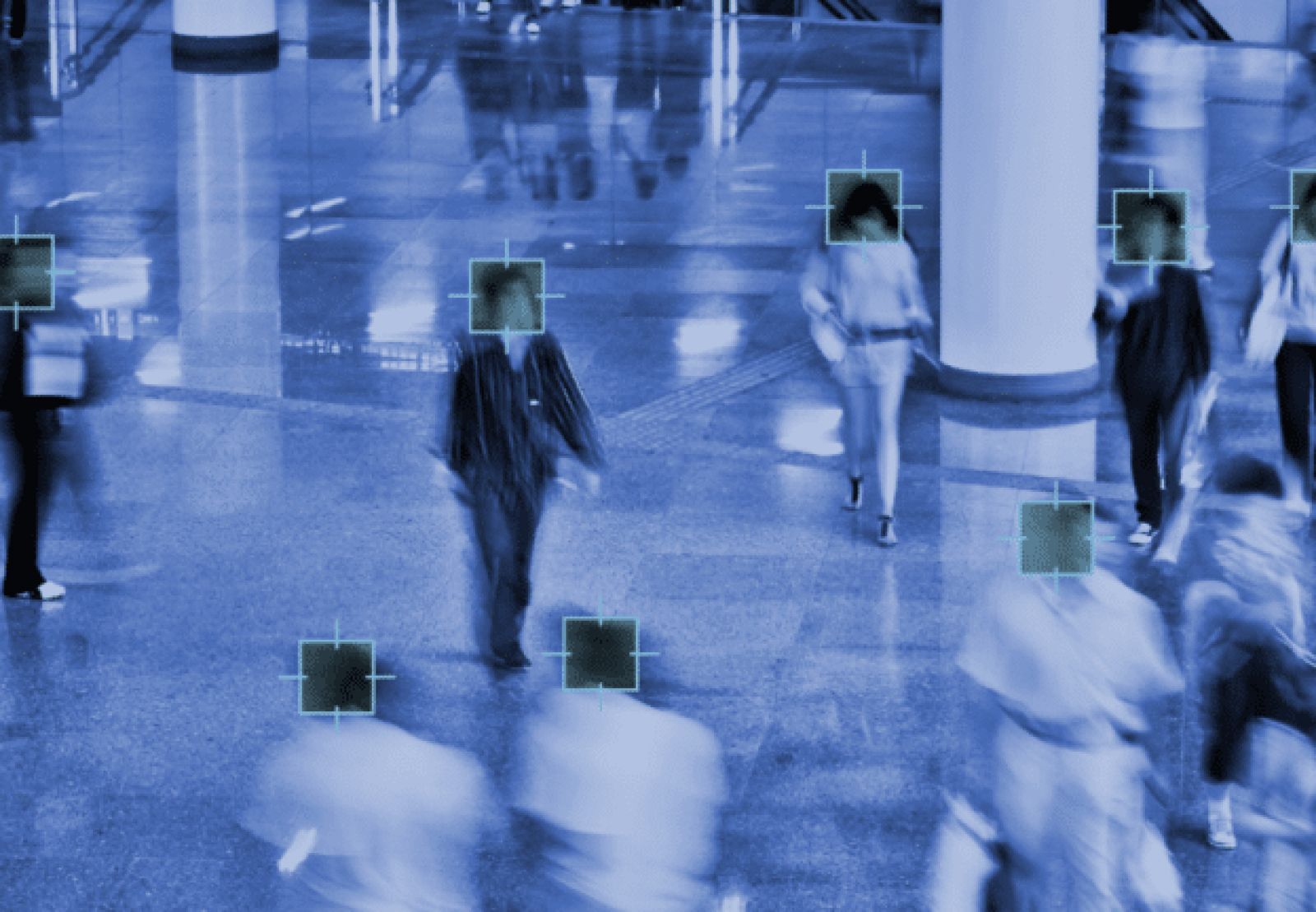

The Australian Human Rights Commission is calling for a moratorium on the use of facial recognition in “high-risk” settings, as well as the establishment of a new AI Safety Commissioner for the country, reports ZDNet.

The 240-page AHRC report, written by outgoing Human Rights Commissioner Edward Santow, makes 38 recommendations. Many of them relate to the accessibility of technology for people with disabilities. An entire section is dedicated to artificial intelligence, however, including a subsection on biometrics, which gives particular attention to facial recognition. That section includes three of the recommendations.

Australian governments at the federal, state and territory levels should write legislation for biometrics and facial recognition that protects human rights, and put a moratorium in place until that legislation is enacted, according to the report.

The report also recommends that the government introduce a statutory cause of action for serious invasions of privacy.

The report considers the importance of purpose limitation, and the importance and limitations of consent as a data protection mechanism.

It notes that stakeholders raised both benefits and risks of facial recognition in consultation. The risks of increasing surveillance, the use of data derived from facial recognition for profiling, and errors from demographic disparities are identified as three main concerns.

Private and public sector uses of facial recognition are considered, and the Bridges case is taken as a case study.

The report also sets out the basics of a consultative process for policy-makers to use in crafting legislation, during which the moratorium would be in place.

“This moratorium would not apply to all uses of facial and biometric technology,” the report clarifies. “It would apply only to uses of such technology to make decisions that affect legal or similarly significant rights, unless and until legislation is introduced with effective human rights safeguards.”

International developments are considered, followed by a reformed legal framework that would require consideration of the type of biometric technology used, the context or decision that the technology is being deployed to contribute to, and what protections are in place to address potential harm. This section notes that “one-to-many facial recognition presents a greater risk to individuals’ human rights than one-to-one systems.”

Brookings considers facial recognition fairness mandates for law enforcement

In a blog post, Mark MacCarthy, nonresident senior fellow in Governance Studies at the Brookings Institution’s Center for Technology Innovation, delves into what specifically “reasonable use” means for the use of face biometrics by law enforcement.

In reviewing the policy landscape, including Washington state’s industry-supported legislation, MacCarthy warns that “it may be too late for a more balanced regulatory approach.”

He notes that the NIST assessments showing face biometric algorithms with 0.1 percent error rates consider high-quality images captured in good conditions. Error rates as high as 20 percent in real-world settings, combined with true matches that miss the operational threshold, call into question the effectiveness of the systems, the post suggests.
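To put the gap between those two figures in concrete terms, the following is a minimal arithmetic sketch, not anything taken from the post or from NIST. It assumes, as a simplification, that the "error rate" is a single per-search failure probability and that searches fail independently. The function name `expected_misses` and the workload of 10,000 searches are hypothetical, chosen only for illustration.

```python
def expected_misses(searches: int, error_rate_percent: float) -> float:
    """Expected number of erroneous results, assuming each search
    fails independently with the given percentage error rate."""
    return searches * error_rate_percent / 100


# Hypothetical workload; this figure does not come from the post.
searches = 10_000

lab = expected_misses(searches, 0.1)   # rate cited for high-quality images
field = expected_misses(searches, 20)  # upper rate cited for real-world settings

print(f"Expected errors at 0.1 percent: {lab:.0f}")
print(f"Expected errors at 20 percent: {field:.0f}")
print(f"Ratio between the two: {field / lab:.0f}x")
```

Under these assumptions the real-world figure implies roughly two hundred times as many errors as the benchmark figure for the same number of searches, which is the scale of the gap the post points to.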

Five recommendations for assessments of the biometric accuracy and fairness of law enforcement facial recognition systems are offered, and the need for both further regulation and analysis is emphasized.
