CMU-Africa researchers develop biometric PAD bias reduction methods and metrics

A team of researchers at Carnegie Mellon University Africa (CMU-Africa) in Rwanda has developed a new approach to making biometric presentation attack detection (PAD) resistant to demographic bias.

Their paper, “Fairness-aware face presentation attack detection using local binary patterns: Bridging skin tone bias in biometric systems,” focuses on demographic bias affecting African people, and has been published in the Journal of Cybersecurity and Privacy.

The researchers chose local binary patterns (LBPs), a type of visual descriptor used in computer vision for classifying subjects, as a basis for their PAD system in part due to their effectiveness in resource-constrained environments. They used a large dataset compiled by the Chinese Academy of Sciences (CASIA-SURF CeFA) to train, test and validate the algorithms they developed.
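
For readers unfamiliar with LBPs, the basic idea can be sketched in a few lines of Python. This is the textbook 3x3 variant, not the researchers' implementation: each pixel is compared with its eight neighbours, the comparisons are packed into an 8-bit code, and the histogram of codes over the image serves as the texture feature. The function names and the neighbour ordering are illustrative assumptions.

```python
def lbp_code(img, r, c):
    """Basic 3x3 LBP code for the pixel at (r, c) of a 2D grayscale list."""
    center = img[r][c]
    # Neighbours visited clockwise starting at the top-left pixel
    # (the ordering is a convention chosen for this sketch).
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    code = 0
    for bit, (dr, dc) in enumerate(offsets):
        # A neighbour at least as bright as the centre sets its bit.
        if img[r + dr][c + dc] >= center:
            code |= 1 << bit
    return code


def lbp_histogram(img):
    """Normalised 256-bin histogram of LBP codes over interior pixels."""
    hist = [0] * 256
    for r in range(1, len(img) - 1):
        for c in range(1, len(img[0]) - 1):
            hist[lbp_code(img, r, c)] += 1
    total = sum(hist)
    return [h / total for h in hist] if total else hist


patch = [[5, 9, 1],
         [4, 6, 7],
         [2, 3, 8]]
print(lbp_code(patch, 1, 1))  # 26: bits set for the top, right and bottom-right neighbours
```

The computation uses only integer comparisons and bit operations, which is one reason LBPs are attractive on low-power hardware.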

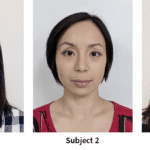

The innovation they present is the use of an “ethnicity-aware preprocessing approach.” This involves the targeted application of adaptive brightness settings and gamma correction optimization for the reflective properties of different skin tones, addressing a long-recognized challenge in image quality.
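
The article does not give the paper's actual preprocessing parameters, so the following Python sketch only illustrates the general idea of brightness-adaptive gamma correction. The `target` brightness and the rule for choosing gamma are assumptions made for this example, not the researchers' settings.

```python
import math


def gamma_correct(pixels, gamma):
    """Apply out = 255 * (in / 255) ** gamma to 8-bit intensities."""
    return [round(255 * (p / 255) ** gamma) for p in pixels]


def mean_brightness(pixels):
    return sum(pixels) / len(pixels)


def adaptive_gamma(pixels, target=128.0):
    """Choose gamma so that the mean input intensity maps to `target`.

    `target` is a hypothetical setting for this sketch. A gamma below 1
    brightens an under-exposed image; a gamma above 1 darkens a bright one.
    """
    mean = min(max(mean_brightness(pixels), 1.0), 254.0)  # avoid log(0) and log(1)
    return math.log(target / 255) / math.log(mean / 255)


dark_face = [40, 60, 80]            # toy stand-in for an under-exposed face crop
g = adaptive_gamma(dark_face)       # below 1 for a dark input
corrected = gamma_correct(dark_face, g)
```

The point of applying a correction like this before feature extraction is that the texture descriptor then sees comparable contrast regardless of how much light the skin reflects.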

“Current methods address fairness through post hoc corrections or data augmentation, but they fail to address the fundamental challenge of ensuring optimal image quality across different skin tones before feature extraction,” the researchers explained in the paper.

Following feature extraction, the researchers used an SGD (stochastic gradient descent) classifier and balanced class weighting to prevent “systemic bias toward the majority class without requiring data reduction or augmentation.” Group-specific threshold optimization was also implemented to limit the equal error rate (EER).
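
A rough Python sketch of these two ideas follows, under stated assumptions. The class weights use the common "balanced" heuristic (total samples divided by the number of classes times the class count), and the per-group threshold is simply the candidate score at which the attack and bona fide error rates are closest. The helper names and toy scores are hypothetical, and the paper's exact procedure may differ.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * cnt) for cls, cnt in counts.items()}


def error_rates(scores, labels, threshold):
    """Labels: 1 = bona fide, 0 = attack. Scores >= threshold are accepted."""
    attacks = [s for s, y in zip(scores, labels) if y == 0]
    bona_fide = [s for s, y in zip(scores, labels) if y == 1]
    apcer = sum(s >= threshold for s in attacks) / len(attacks)     # attacks accepted
    bpcer = sum(s < threshold for s in bona_fide) / len(bona_fide)  # bona fide rejected
    return apcer, bpcer


def eer_threshold(scores, labels):
    """Return (threshold, approximate EER) where the two error rates are closest."""
    def gap(t):
        apcer, bpcer = error_rates(scores, labels, t)
        return abs(apcer - bpcer)
    best = min(sorted(set(scores)), key=gap)
    apcer, bpcer = error_rates(scores, labels, best)
    return best, (apcer + bpcer) / 2


def group_thresholds(scores, labels, groups):
    """Fit one EER threshold per demographic group."""
    result = {}
    for g in sorted(set(groups)):
        idx = [i for i, name in enumerate(groups) if name == g]
        result[g] = eer_threshold([scores[i] for i in idx],
                                  [labels[i] for i in idx])[0]
    return result


# Toy scores: group "B" sits lower on the score scale than group "A".
scores = [0.1, 0.2, 0.3, 0.6, 0.7, 0.9, 0.05, 0.1, 0.15, 0.3, 0.35, 0.45]
labels = [0, 0, 0, 1, 1, 1] * 2
groups = ["A"] * 6 + ["B"] * 6
print(group_thresholds(scores, labels, groups))  # {'A': 0.6, 'B': 0.3}
```

In this toy data a single global threshold of 0.6 would reject every bona fide sample from group "B", which is the kind of disparity per-group thresholds are meant to remove.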

The researchers also developed a statistical framework for evaluating the fairness of PAD algorithms, which includes three novel methods. A coefficient of variation (CoV) analysis provides a method for evaluating the trade-off between security and fairness. McNemar’s test indicates whether the performance differences found are statistically significant or merely noise. And bootstrap confidence intervals quantify the uncertainty built into performance estimates by resampling with 1,000 iterations to generate 95 percent confidence intervals across ethnic groups.
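
The three statistics are standard, and a minimal Python version of each is sketched below. Several choices here are assumptions rather than details from the paper: the CoV uses the population standard deviation, McNemar's test uses the continuity-corrected chi-square form, and the bootstrap uses the simple percentile method on per-sample correctness flags.

```python
import math
import random
import statistics


def coefficient_of_variation(values):
    """Population standard deviation divided by the mean.

    Applied to per-group error rates or accuracies, lower means more even.
    """
    return statistics.pstdev(values) / statistics.mean(values)


def mcnemar_test(b, c):
    """Continuity-corrected McNemar test on discordant counts.

    b = samples only system 1 classified correctly,
    c = samples only system 2 classified correctly.
    Returns (chi-square statistic, p-value with 1 degree of freedom).
    """
    if b + c == 0:
        return 0.0, 1.0
    stat = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of a chi-square variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


def bootstrap_ci(correct, n_iter=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for accuracy from 0/1 correctness flags."""
    rng = random.Random(seed)
    n = len(correct)
    means = sorted(sum(rng.choices(correct, k=n)) / n for _ in range(n_iter))
    lower = means[int(alpha / 2 * n_iter)]
    upper = means[min(n_iter - 1, int((1 - alpha / 2) * n_iter))]
    return lower, upper


# Toy usage: 90 correct decisions out of 100 for one group.
flags = [1] * 90 + [0] * 10
low, high = bootstrap_ci(flags)
stat, p = mcnemar_test(10, 2)
```

Computing the interval separately for each group makes it possible to see whether an apparent accuracy gap is larger than the sampling uncertainty around each estimate.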

The changes reduced the difference in accuracy between African and East Asian subjects from just over 3 percent to 0.75 percent, according to the paper.

“The methodology demonstrates that demographic fairness can be secured through algorithmic revisions instead of being reliant on massive data alteration or special-purpose hardware, which makes fair PAD systems easier to use more routinely in different contexts of deployment,” the CMU-Africa researchers conclude.
