New research shows MLLMs can boost biometric morph attack detection

A group of Slovenian researchers has introduced a new way to enhance single-image morphing attack detection (S-MAD) systems with the help of open-source Multimodal Large Language Models (MLLMs), which they say outperform widely used MAD models in detecting biometric spoofs.

The findings point to a promising future direction in which MLLM-based MAD systems could become “trustworthy, explainable, and operationally viable tools” for biometric security applications, according to the researchers from the University of Ljubljana and the Jožef Stefan Institute (JSI).

“We hope this work contributes to more robust, explainable, and trustworthy biometric systems, and encourages further exploration of multimodal foundation models in digital forensics,” says Vitomir Štruc, a professor at the University of Ljubljana and one of the co-authors of the report.

The paper, titled Exploring Multimodal Large Language Models for Morphing Attack Detection, was presented at the 29th Computer Vision Winter Workshop in Czechia earlier in February.

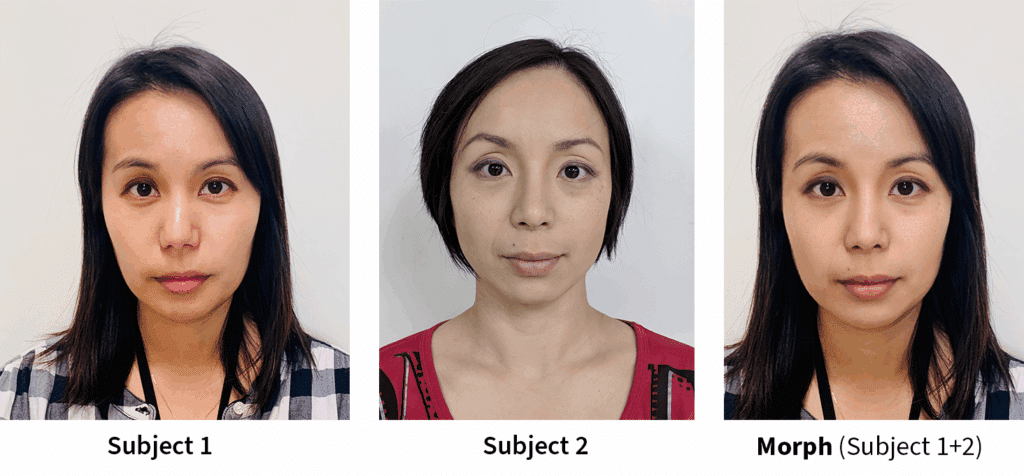

Face morphing attacks, which blend facial images of two individuals into a single composite, have become a serious threat to biometric security systems, including those used at border crossings to match passports with faces.

To catch fraudsters, morphing attack detection systems either compare a suspect image against a trusted reference image or analyze the suspect image on its own. The latter approach, called single-image MAD, is particularly useful in real-world scenarios such as border control, where reference images are often unavailable.

Single-image morphing attack detection systems, however, often suffer from poor cross-dataset generalization, meaning they perform well on data similar to their training set but fail when tested on images from different sources or under different capture conditions. They also operate as opaque “black boxes.”

Both weaknesses are particularly problematic in high-stakes scenarios such as border control, the researchers say.

The paper explored applying MLLMs, AI systems trained on both text and images that can understand and reason about visual content using natural language, to the S-MAD task. The models were evaluated under a strict cross-dataset protocol using two different approaches.

First, selected MLLMs were assessed in zero-shot settings using a structured forensic prompting framework, which elicits multi-step semantic analysis with human-readable regional attributions. These attributions highlight the specific areas of the image that contribute to the decision, making the model’s reasoning transparent and verifiable, according to the paper.

Second, the researchers used Low-Rank Adaptation (LoRA) and a synthetic training dataset of morphs to adapt the best-performing MLLM to the MAD task in an “efficient, generalizable, and privacy-preserving manner.”
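
For readers unfamiliar with LoRA, the idea can be sketched in a few lines of Python. This is a minimal illustration of the general technique, not code from the paper: the pretrained weight matrix stays frozen, and only two small matrices, B and A, are trained and added back as a scaled low-rank update. The function names, matrix sizes, rank, and scaling value below are all assumptions chosen for illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, b, a, alpha, rank):
    """Return W + (alpha / rank) * (B @ A), the weight a LoRA-adapted layer applies.

    w is the frozen pretrained matrix (d_out x d_in);
    b (d_out x rank) and a (rank x d_in) are the only trained parameters.
    """
    scale = alpha / rank
    delta = matmul(b, a)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def lora_param_counts(d_out, d_in, rank):
    """Compare trainable parameters: full fine-tuning vs. a LoRA update."""
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora


# Illustrative sizes only (hypothetical, not taken from the paper).
full, lora = lora_param_counts(4096, 4096, 8)
print(f"full fine-tuning: {full} parameters, LoRA update: {lora} parameters")
```

Because only the small matrices are trained, the number of parameters to update for a single layer of this hypothetical size drops by a factor of 256, which is what makes adapting a large model comparatively cheap.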

“Our experimental results show that the proposed prompting strategy significantly improves overall attack detection accuracy compared to naive prompting,” the report notes. “Moreover, our LoRA-adapted MLLM, Gemma-3 12B, achieves an average equal error rate (EER) of 14.81 percent across various morphing attack benchmarks, outperforming widely used MAD models.”
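
The equal error rate is the operating point at which the share of missed morphs equals the share of genuine images wrongly flagged, so a lower value is better. The following is a minimal sketch of how such a figure can be approximated from detector scores. It assumes that higher scores mean "more likely a morph"; the function name and the sample scores are made up for illustration and are not the paper's data or evaluation code.

```python
def equal_error_rate(bona_fide_scores, morph_scores):
    """Approximate the EER, assuming higher scores mean 'more likely a morph'.

    Sweeps every observed score as a decision threshold and returns the
    average of the two error rates at the threshold where they are closest.
    """
    thresholds = sorted(set(bona_fide_scores) | set(morph_scores))
    best_gap, eer = float("inf"), None
    for t in thresholds:
        # Morphs scoring below the threshold are accepted as genuine (missed attacks).
        miss_rate = sum(s < t for s in morph_scores) / len(morph_scores)
        # Genuine images scoring at or above the threshold are wrongly flagged.
        false_alarm_rate = sum(s >= t for s in bona_fide_scores) / len(bona_fide_scores)
        gap = abs(miss_rate - false_alarm_rate)
        if gap < best_gap:
            best_gap, eer = gap, (miss_rate + false_alarm_rate) / 2
    return eer


# Made-up scores: one genuine image and one morph fall on the wrong side.
genuine = [0.1, 0.2, 0.3, 0.6]
morphs = [0.4, 0.7, 0.8, 0.9]
print(f"EER: {equal_error_rate(genuine, morphs):.2%}")
```

With only a handful of scores the two error rates may never match exactly, which is why the sketch picks the closest point; evaluations on real benchmarks typically interpolate between thresholds instead.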

Morphing attacks have become a focus of research across Europe, including Germany. The EU has invested around 20 million euros (US$23.4 million) in research into biometric morphing detection, including the Fidelity, SOTAMD and iMARS projects.
