Biometrics researchers play too fast and loose with flashy findings — study

As a rule, the more huffery in coverage of exciting biometric research, the more puffery turns up when the research is actually analyzed.

Case in point: A team of researchers found unabashedly credulous coverage in popular media of almost any lab work involving adversarial machine-learning attacks against biometric surveillance systems.

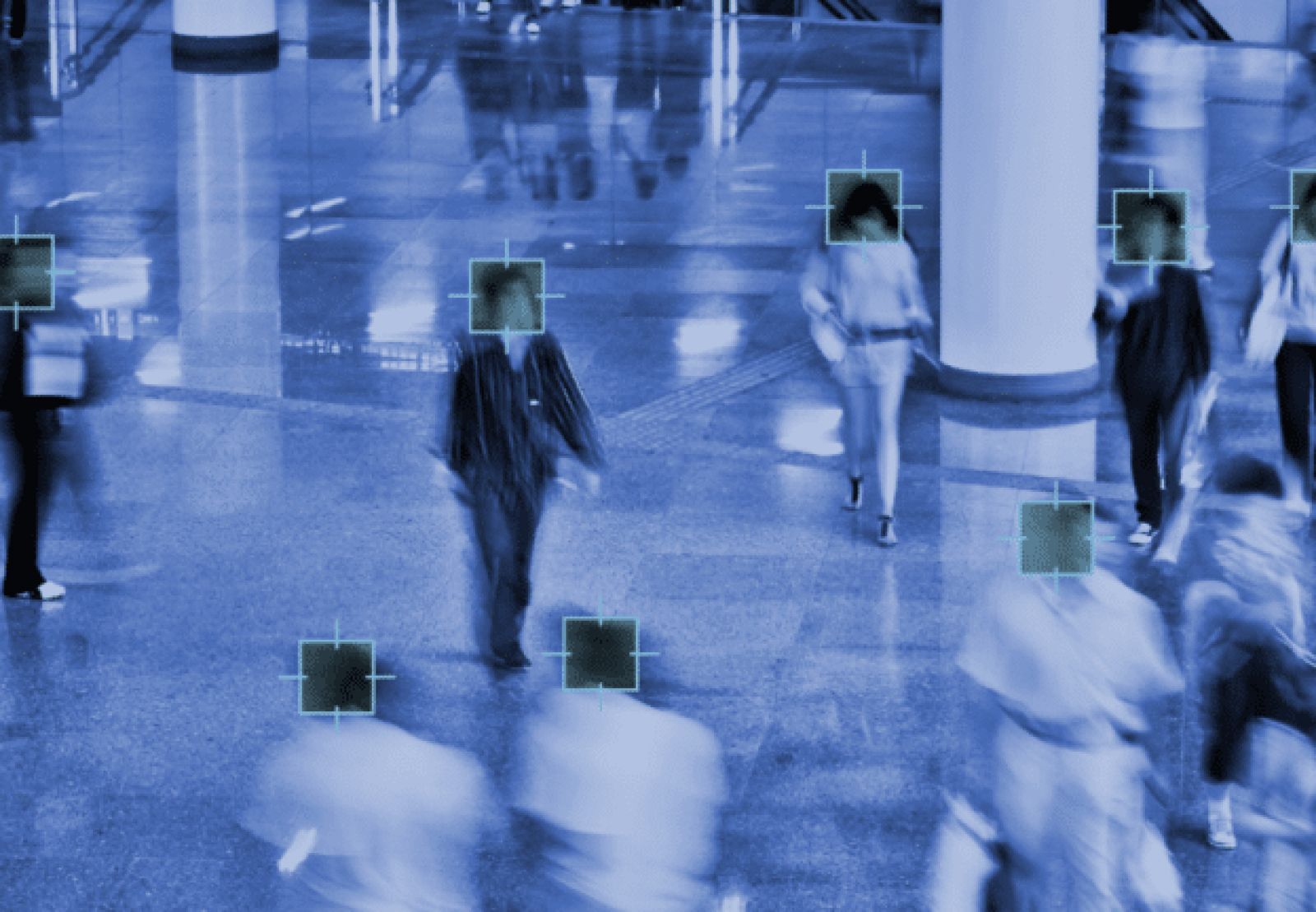

The researchers focused on developments promising invisibility cloaks and masks that might hide people from computer vision systems feeding data to facial recognition algorithms. Their work was posted to the arXiv preprint repository.

Regardless of how many qualifiers are used in research papers, too many in the media think only of how high the news will climb in search engine results.

And the only thing rarer in research than an adequately funded study is an organization demanding corrections to articles that make its scientists look more like geniuses than they may be.

While much of the blame falls on consumer-interest editors, the researchers investigating this symbiotic relationship say organizations are playing cute with less-than-challenging investigatory processes.

In fact, according to the new paper, they are not publishing based on “actual (sic) real world testing.”

They are not conducting extensive human subjects research, according to the team, which includes researchers from Microsoft, the research group Citizen Lab, Harvard University and Swarthmore College.

They wrote: “We found the physical or real-world testing conducted was minimal, provided few details about testing subjects and was often conducted as an afterthought or demonstration.” Performance and reliability outside the laboratory are inadequately tested.

For good measure, they said future research on facial recognition and other surveillance technologies should recruit subjects from the populations most often targeted by biometric surveillance systems: people of color, people who are not cisgender, and sex workers.

