Dialogue informing biometrics regulation just getting started, says Ada Lovelace panel

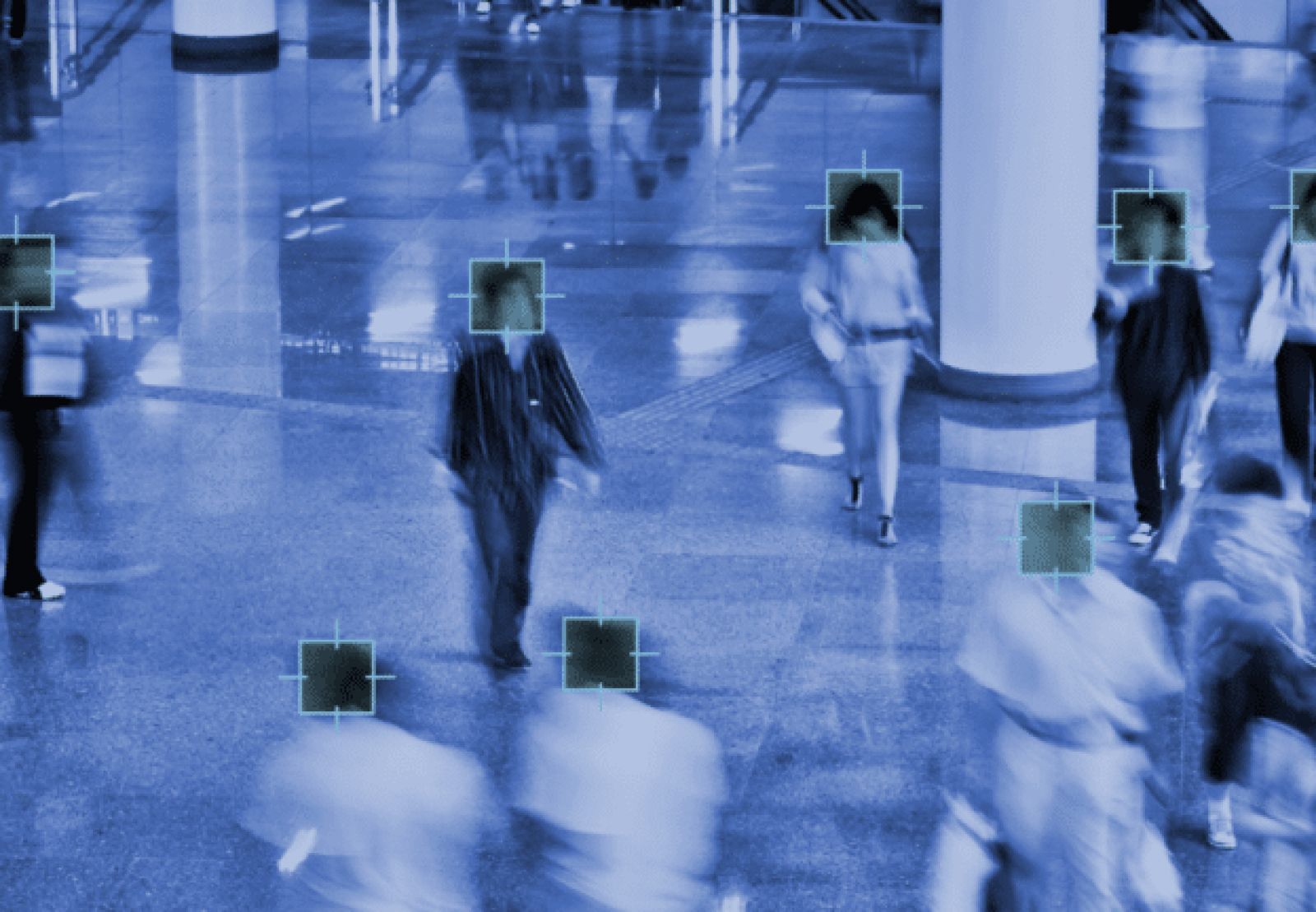

The role of ethics, law, and public comfort in the development of regulation and governance for biometrics, and facial recognition in particular, was explored in an online event hosted by the Ada Lovelace Institute this week.

The event was moderated by Institute Chair Imogen Parker, and its panel was made up of AI Now Institute Director of Global Policy and Programmes Amba Kak, Future of Privacy Forum Senior Counsel and Director of AI and Ethics Brenda Leong, and UK Biometrics and Surveillance Camera Commissioner Fraser Sampson.

Parker began by noting the Institute’s focus on observing and reporting how the public perceives the fair use of biometrics, as well as on legal review.

The approaches taken by different jurisdictions to regulating biometrics were explored, along with next steps and the considerations that should go into them.

Kak critiqued “a broadly uncritical acceptance or normalization” of the use of biometrics, underpinned by assumptions about accuracy and benefit which are being challenged by some researchers and civil society groups.

Data protection regulations remain the main mechanism of limitation or redress under law, but Kak also invokes potential for harms based on inaccuracy or misuse. Kak notes that analysis like emotion recognition is also defined as biometrics in some jurisdictions, somewhat controversially.

The AI Now Institute’s 2020 report on ‘Regulating Biometrics’ found that proportionality disputes are generally considered against nebulous public security criteria, which tends to result in application approval, Kak points out.

The EU’s recent AI guidance is criticized as driving attention to a narrow band of biometric applications, potentially leaving out many egregious uses.

Leong reviewed the U.S. regulatory landscape, including the importance of an Illinois court ruling that commercial insurance policies cover claims under the state’s biometric data privacy law without explicitly saying so. She sees voice biometrics as a looming challenge to regulation and rights protection.

Sampson said that automated facial recognition has dominated “literally every conversation and email since I arrived in March,” and notes the creation of a new forensic science regulator for England and Wales as a step forward for oversight of law enforcement biometrics.

The consequences associated with a given biometrics use case are key to setting appropriate regulations for different uses. Drones, doorbells, and body-worn cameras have taken a lot of Sampson’s attention so far. He has also worked to promote the voluntary Surveillance Camera Code certification program, and discussed its place within the regulatory and oversight landscape during the event.

The future of biometrics use, he says, will be defined by what is socially acceptable, rather than by what is technically possible or legally permissible.

That would represent a significant shift in governance.

Where ethical acceptability fits into the biometrics regulation picture was discussed, along with the proliferation of unproven AI facial analysis technologies.

Regulators, civil society groups weigh in

Canada’s Privacy Commissioner has ruled that the country’s national police (RCMP) violated the Privacy Act with its use of Clearview AI’s facial recognition, a finding disputed by the force.

Hundreds of searches were conducted with Clearview, the Commissioner’s investigation found, to match suspects against the biometric records of “massive repositories of Canadians who are innocent of any suspicion of crime,” Commissioner Daniel Therrien says.

The RCMP has ultimately agreed to implement the recommendations of the Office of the Privacy Commissioner to improve its policies, systems and training. The police force will also create a new oversight function to ensure personal information is only collected and used in accordance with the law.

The Office has also issued privacy guidance on facial recognition for police agencies in the country, in collaboration with provincial and territorial counterparts. Public consultation on the guidance is sought until the comment period closes on October 15, 2021.

The Biometric Services Gateway used for mobile scans by UK police is criticized for a lack of public consultation prior to roll-out and inconsistent application between forces in a new report co-authored by Racial Justice Network UK. Worse, the ‘Stop the Scan’ report found evidence of systemic racial bias in every police force providing race data, with Arab, Black, and Asian people much more likely to be subjected to mobile biometrics scans than Caucasians.

An analysis from the Project on Government Oversight (POGO), meanwhile, emphasizes the importance of regulation beyond law enforcement and government agencies.

The threats of biometrics-powered stalking, harassment, and vigilantism are compounded by the availability of tools like PimEyes, Jake Laperruque argues. He also notes the problems caused by Uber’s implementation of face authentication for its drivers in London.

The recently introduced Fourth Amendment Is Not For Sale Act would be a significant step forward, according to Laperruque.

