New European Parliament guidelines for AI and facial recognition invite moratorium consideration

A report released by the European Parliament (EP) on Wednesday calls for a new legal framework, with definitions and ethical principles, to govern the development and use of artificial intelligence and surveillance-related face biometric applications.

The report, which passed with 364 votes in favor, 274 against, and 52 abstentions, calls for the establishment of an EU legal framework ensuring that AI and related technologies are developed in a human-centered way.

The document is divided into three main topics: military use and human oversight, AI in the public sector, and mass surveillance and deepfakes.

“Faced with the multiple challenges posed by the development of AI, we need legal responses,” explained MEP Gilles Lebreton from the EP Identity and Democracy Group.

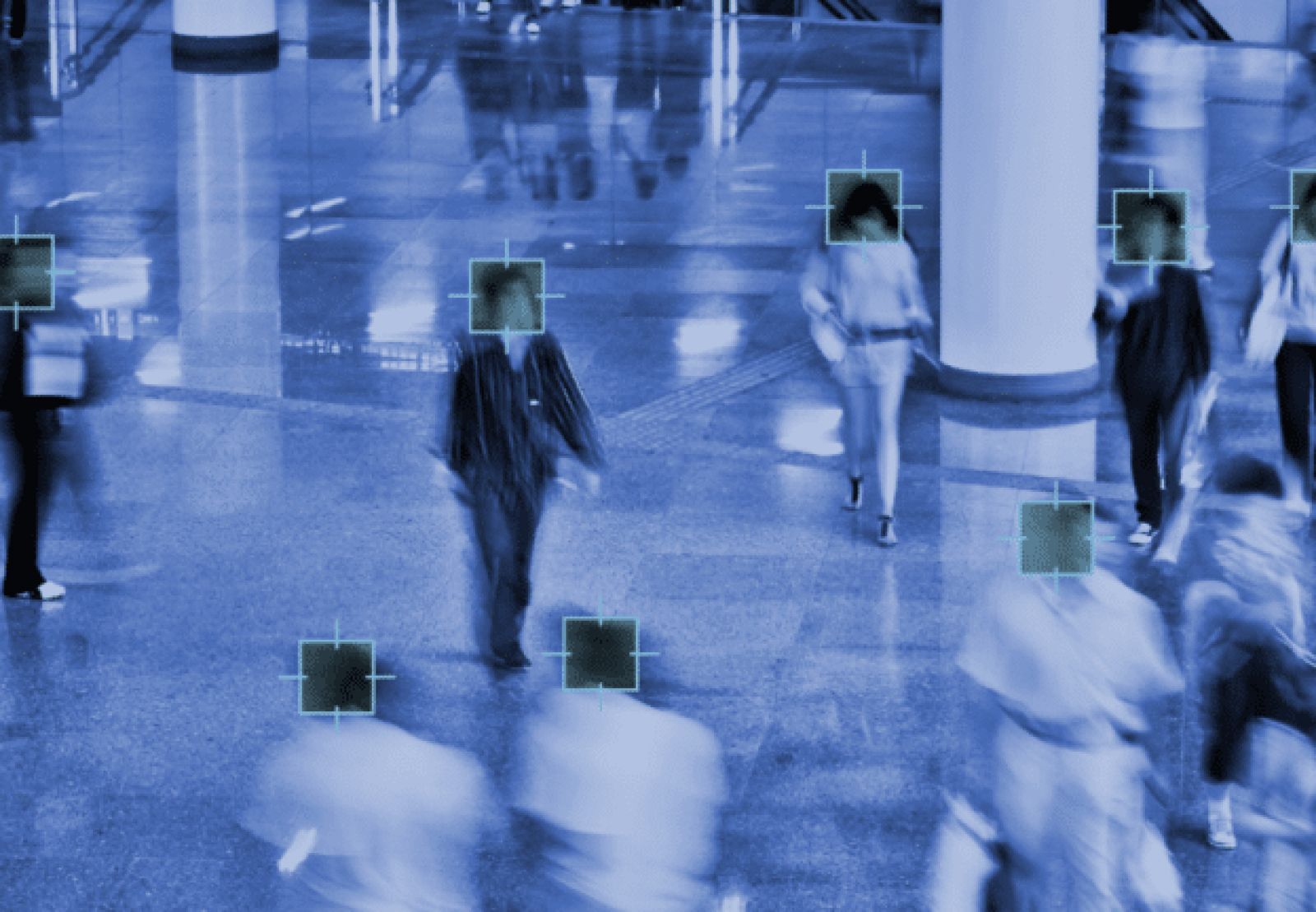

The section of the report on state authority “Invites the Commission to assess the consequences of a moratorium on the use of facial recognition systems, and, depending on the results of this assessment, to consider a moratorium on the use of these systems in public spaces by public authorities and in premises meant for education and healthcare, as well as on the use of facial recognition systems by law enforcement authorities in semi-public spaces such as airports, until the technical standards can be considered fully fundamental rights-compliant, the results derived are non-biased and non-discriminatory, and there are strict safeguards against misuse that ensure the necessity and proportionality of using such technologies.”

The first section of the report states that human dignity and human rights should always be respected in all EU defense-related activities, particularly with regard to lethal autonomous weapon systems (LAWS), which the EP condemned in 2018.

Commonly called “killer robots,” these machines use deep neural network-powered algorithms to perform face recognition on their target, then fire without the need for human intervention.

The new guidelines aim to change that by introducing a further element of human control based on the principles of proportionality and necessity.

The second part of the report focuses on clarifying that AI systems deployed in public services, particularly healthcare and justice, should not replace human contact or lead to discrimination caused, for example, by facial recognition-related biases.

“To prepare the Commission’s legislative proposal on this subject,” Lebreton explained, “this report aims to put in place a framework which essentially recalls that, in any area, especially in the military field and in those managed by the state such as justice and health, AI must always remain a tool used only to assist decision-making or help when taking action.”

Finally, MEPs called for new guidelines related to human rights violations potentially caused by biometrics and other AI technologies in mass civil and military surveillance.

Dubbed “highly intrusive social scoring applications,” these practices include the deployment of facial recognition camera systems capable of identifying individuals in public, even without their consent.

Deepfake technologies are also mentioned in the report, with a warning that they could destabilize countries, spread disinformation, and influence elections.

