Google allows biometrics for YouTube likeness detection to be used in AI training

In 2025, two of the biggest risks in tech are related. First is the deepfake threat: synthetic media is flooding the internet, eroding our capacity to distinguish what is real and true from what has been generated by a large language model or diffusion engine. Second is that any piece of data you put online now is destined to be sucked into an AI training dataset.

Google has apparently decided to combine the two problems into a hybrid concern. A report from CNBC quotes experts who say YouTube’s privacy policy technically allows it to use creators’ biometrics – ostensibly collected for the purpose of detecting and removing unauthorized and AI-manipulated use of their likeness – to train the company’s AI models.

YouTube says it never uses the biometrics and identity data it collects for the purpose of likeness protection to train its algorithms. But, according to the report, experts say that Google’s privacy policy leaves the door open for future misuse of creators’ biometrics, in stating that “public content, including biometric information, can be used to help train Google’s AI models and build products and features.”

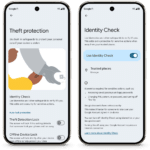

YouTube says it has no plans to change the privacy policy. But it tells CNBC that it is “considering ways to make the in-product language clearer.” The likeness detection tool, which will launch in January for creators in the YouTube Partner Program, is optional – but, as a YouTube spokesperson notes, it “does require a visual reference to work.”

YouTube has already faced data privacy concerns over its algorithmic age inference model, which guesses a user’s age based on their viewing patterns and behavior. CNBC notes that, as the technology develops, the interests of companies owned by Alphabet – namely, Google and YouTube – may diverge, as Google prioritizes training its models and YouTube aims to maintain trust with its creators and users.

As liveness detection was to 2024, likeness detection will be to 2026

The issue of likeness protection is one of the newest subcategories in biometrics, but it stands to become one of the most urgent. As the generative algorithmic software typically called AI becomes better and more freely available, it becomes easier to steal and exploit someone else’s face online.

This is an obvious problem for celebrities, who have already started organizing to try to protect the value inherent in their appearance. Scarlett Johansson excoriated OpenAI for making its chatbot sound like her after she’d turned down the gig, and Bryan Cranston is leading a fresh charge to stop likeness theft from hobbling Hollywood.

The small screen, however, is the battleground, and YouTube creators must also deal with fake or generated images of themselves doing things online that put their reputations and credibility at risk. CNBC profiles Mikhail Varshavski, also known as Dr. Mike, a physician YouTuber who reacts to medical dramas and debunks medical myths, and worries about the trust he’s built up over years being compromised by AI deepfake versions of himself.

The issue is becoming pressing enough to spur efforts at regulation, notably in Denmark, which has legislated the right to own one’s likeness. And it is spurring innovation from startups. Companies such as Loti AI and Vermillio are offering services that find unauthorized uses of a client’s likeness and give them the option of requesting removal. In April 2025, Loti closed a $16.2 million Series A funding round, after opening up its product to the general public. A statement from CEO Luke Arrigoni says that “from deepfakes to unauthorized illicit content, these threats are no longer limited to celebrities.”

The deepfake threat is everyone’s problem now.
